Index

It has been about nine years since the PCI, VESA and OPTi buses were first introduced. These technologies meant a revolution in bus bandwidth, which at the time was limited to a mere 5MB/s on the ISA bus. Even though VESA Local Bus (VLB) got off to a good start, it was PCI that emerged victorious. The consequences can be felt to this day: most modern desktop computers still use the same PCI technology that was introduced nine years ago. The clock speed of 33MHz, the bus width of 32 bits and the maximum bandwidth of 133MB/s have stayed the same all this time.

It won't come as a shock when I say that the hunger for bus bandwidth has grown dramatically over the last decade. In the early '90s a hard disk could pat itself on the back for achieving a transfer rate of 3MB/s or more. Components like 8-channel audio cards, TV cards, RAID adapters and Gigabit Ethernet were nowhere to be found or carried an exorbitantly high price tag. Nowadays it is not hard to create a situation where the bandwidth of the PCI bus is the limiting factor. For that reason server and workstation solutions have moved to faster forms of PCI with a bus width of 64 bits and clock speeds of 66, 100 or 133MHz. Most of the time these systems are equipped with more than one PCI bus so that devices do not have to share a single bus. The benefits are evident: higher bandwidth, lower latencies and fewer conflicts between devices.
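The bandwidth figures quoted above follow directly from bus width and clock speed. The sketch below recomputes them; the function name and the list of variants are illustrative choices, and the results are theoretical peaks that ignore protocol overhead and bus sharing.

```python
# Theoretical peak bandwidth of a parallel PCI bus:
#   bytes per transfer (width in bits / 8) * transfers per second (clock in MHz)
# Real-world throughput is lower because of protocol overhead and bus sharing.


def pci_peak_mb_per_s(width_bits: int, clock_mhz: float) -> float:
    """Return the theoretical peak transfer rate in MB/s (1 MB = 10^6 bytes)."""
    return width_bits / 8 * clock_mhz


# The nominal 33, 66 and 133MHz clocks are really 33.33, 66.66 and 133.33MHz.
variants = [
    ("PCI 32-bit/33MHz", 32, 100 / 3),
    ("PCI 64-bit/66MHz", 64, 200 / 3),
    ("PCI-X 64-bit/100MHz", 64, 100.0),
    ("PCI-X 64-bit/133MHz", 64, 400 / 3),
]

for name, width, clock in variants:
    print(f"{name:<22}{pci_peak_mb_per_s(width, clock):7.0f} MB/s")
```

The first row reproduces the familiar 133MB/s of desktop PCI; doubling both width and clock gives four times that figure for 64-bit 66MHz PCI.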

Regretfully, these faster PCI variants are too expensive for desktop systems. A successor to the current PCI system is in the making: PCI Express. PCI Express is a completely new technology based on serial rather than parallel data transfer. It will first be used to replace the current AGP (Accelerated Graphics Port) slot, but will in the near future also replace the current PCI bus.

One of the biggest consumers of bus bandwidth is RAID storage. Even a simple stripe set of two 10,000rpm Serial ATA disks can saturate the PCI bus in some cases. On the forums we regularly hear people say that a large RAID array is useless on a normal PCI bus. They base their opinion on the fact that an array of two or more disks can already generate a higher sequential transfer rate than the PCI bus can provide. In practice, however, sequential access is only one of many access patterns. Opening a large file in Photoshop, for instance, will in the ideal case indeed be a sequential read (the read head does not have to move to a different part of the disk). When large amounts of data are read from a heavily fragmented disk, more head movements are needed. When starting an application whose files are spread further apart, or when running multiple disk-intensive tasks simultaneously, transfer rates fall far below the theoretical maximum. Depending on the rotation speed of the drive and the performance of the actuator, a change in head position takes on average 5.5 to 12.0ms or more. Each head movement results in a dip in the overall transfer rate. The differences can be so dramatic that even the fastest 15,000rpm hard disks reach only 4MB/s with a completely random access pattern, whereas a sequential transfer rate of 75MB/s is possible.
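To get a feel for why head movements hurt so much, the following sketch uses a deliberately simple model in which every request pays one positioning delay before the data is transferred at the sequential rate. The 64KB request size is a hypothetical value chosen for illustration; only the 75MB/s sequential rate and the 5.5 to 12.0ms range come from the text, and caching, queueing and striping are ignored.

```python
def effective_mb_per_s(request_kb: float, access_ms: float,
                       sequential_mb_per_s: float) -> float:
    """Average throughput when every request pays one positioning delay.

    Simplified model (an assumption, not a measurement):
        time per request = access time + request size / sequential rate
    """
    request_mb = request_kb / 1000  # 1 MB = 1000 KB in this sketch
    transfer_s = request_mb / sequential_mb_per_s
    return request_mb / (access_ms / 1000 + transfer_s)


# Hypothetical workload: 64KB requests on a disk with a 75MB/s sequential rate.
# An access time of 0ms corresponds to purely sequential reading.
for access_ms in (0.0, 5.5, 12.0):
    rate = effective_mb_per_s(64, access_ms, 75)
    print(f"access time {access_ms:4.1f} ms -> {rate:5.1f} MB/s")
```

Even in this crude model a few milliseconds of positioning time per request cut throughput to a fraction of the sequential rate, and smaller requests make the effect stronger.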

So the question is: to what extent does the maximum transfer rate of the standard PCI bus limit the real-world performance of desktop and server environments? To check whether there is a real loss in performance when fast RAID arrays are combined with low bus speeds, we tested a pair of SCSI RAID adapters in different PCI configurations. The purpose of this test is not only to see whether the PCI bus is a bottleneck for present-day servers and workstations, but also to show whether PCI Express will be a useful upgrade for future desktops, which in a couple of years will have the same storage performance as the SCSI RAID configurations we tested.

Testbed

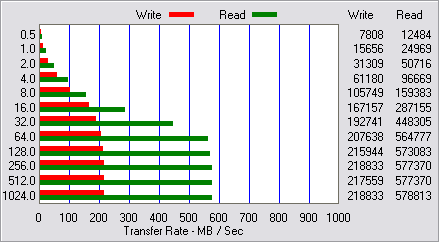

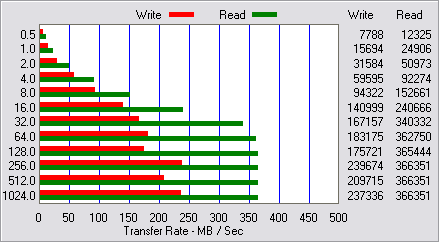

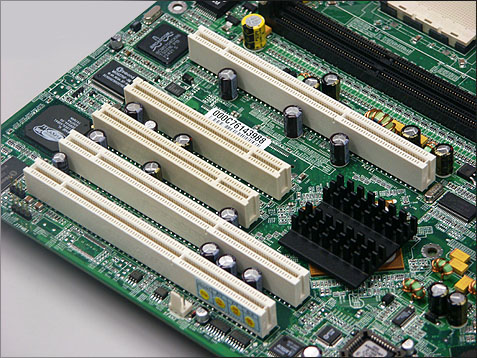

The benchmarks were run on an MSI K8D Master dual Opteron board with two 32-bit 33MHz PCI slots, three 100MHz PCI-X slots (divided over two PCI buses) and a 1.6GHz Opteron 242 processor. Testing was done with a Mylex AcceleRAID 600 and an LSI Logic MegaRAID Elite 1600. The Elite 1600 is a seasoned dual-channel Ultra160 SCSI adapter that, in spite of its age of three years, still performs quite well. The adapter supports 64-bit 66MHz PCI and is based on a 100MHz Intel i960RN I/O processor. The Mylex AcceleRAID 600 is a modern dual-channel Ultra320 SCSI adapter that stands apart because of its high level of integration. The Xircon SCSI controller and IBM PowerPC 405 I/O processor are integrated into one chip, allowing for faster communication between these parts. Both cards were equipped with 128MB of cache; the MegaRAID used 100MHz SDRAM and the AcceleRAID 266MHz DDR SDRAM. Thanks to its faster processor and memory the Mylex can reach higher transfer rates than the MegaRAID, which is capable of a transfer rate of 136MB/s using write-back caching and adaptive read-ahead. Even though the LSI card is somewhat limited in this respect, we felt it appropriate to use it because of its good I/O performance, which matters most.

The RAID controllers were connected to four Maxtor Atlas 15k 18.4GB Ultra320 SCSI hard disks spinning at 15,000 rotations per minute. These disks can achieve a maximum sequential transfer rate of 75MB/s.

MSI K8D Master mainboard and Mylex AcceleRAID 600 SCSI RAID adapter

To measure the influence of different PCI bus speeds we tested the AcceleRAID 600 on a 100MHz PCI-X bus and on a PCI-X bus shared with a Promise FastTrak 100, which brings the bus speed down to 66MHz. The LSI MegaRAID Elite 1600 was tested on a 64-bit 66MHz PCI bus, on a 32-bit 33MHz PCI bus, and on a 32-bit 33MHz PCI bus with extra load running in the background. The extra load was produced by an IOMeter transfer rate benchmark running on a Western Digital WD800JB hard disk connected to the Promise FastTrak. The FastTrak was placed next to the MegaRAID on the 32-bit 33MHz PCI bus, generating a continuous bus load of 25MB/s, not counting overhead.
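A back-of-the-envelope budget shows why the loaded configuration is interesting. The sketch below takes the theoretical 133MB/s peak of 32-bit 33MHz PCI, subtracts the 25MB/s background load and compares what is left with the 136MB/s the MegaRAID is said to be capable of. The constant and function names are illustrative, and bus overhead is ignored, so real headroom will be smaller.

```python
PCI_PEAK_MB_S = 32 / 8 * (100 / 3)  # theoretical peak of 32-bit/33MHz PCI, about 133MB/s
BACKGROUND_LOAD_MB_S = 25.0         # continuous load generated via the FastTrak
MEGARAID_PEAK_MB_S = 136.0          # transfer rate the MegaRAID is capable of


def remaining_bandwidth(bus_peak: float, background: float) -> float:
    """Bandwidth left for other devices on a shared bus (overhead ignored)."""
    return max(bus_peak - background, 0.0)


left = remaining_bandwidth(PCI_PEAK_MB_S, BACKGROUND_LOAD_MB_S)
shortfall = MEGARAID_PEAK_MB_S - left

print(f"left for the RAID adapter: {left:.0f} MB/s")
print(f"adapter peak exceeds this by: {shortfall:.0f} MB/s")
```

Under these assumptions the adapter could in theory already outrun an idle legacy bus, and the background load widens that gap.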

The performance of the PCI and RAID configurations mentioned above was measured using ATTO Disk Benchmark, Winbench '99 v2.0 and the StorageMark benchmarks developed in-house. These benchmarks were developed using Intel's IPEAK Storage Performance Toolkit and are based on access patterns resembling real-world desktop and workstation applications. New in this review are the IPEAK SPT web and database server benchmarks, which replace the IOMeter web server benchmarks. In our opinion the access patterns created by IOMeter are a tad too synthetic. This is why we created our own benchmarks on a simulated Apache and MySQL server. The desktop benchmarks were run in RAID-0 and the server benchmarks in RAID-5; in both cases four disks were used.

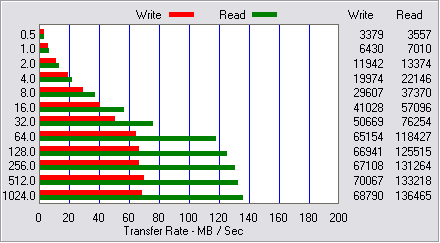

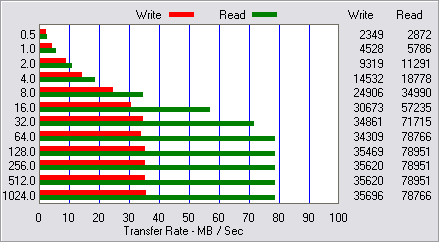

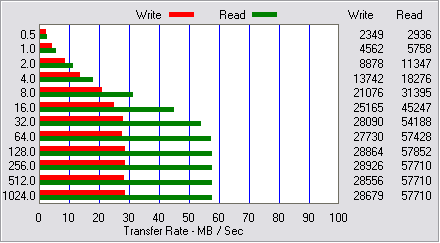

Low-level performance

Desktop performance

For this part of the article we used a subset of the desktop benchmarks described in the StorageMark 2003 testing methodology (Dutch). We also used a new DVD Copy trace, which was recorded while building a DVD in IfoEdit. The tests are based on the access patterns of real applications and as such do a better job of representing RAID performance than one-dimensional sequential transfer rate tests.

Office Light and Office Heavy are based on a trace of Business Winstone 2002. This application benchmark simulates a user performing actions in Access, Excel, FrontPage, PowerPoint, Word, Microsoft Project '98, Lotus Notes, WinZip, Norton Anti-virus and Netscape Communicator. Some applications were run simultaneously, with switching between programs in the meantime, as a user would normally do. The Light test was recorded at minimal fragmentation and the Heavy test with heavy fragmentation and background operations. In both cases there turn out to be big performance differences between 64-bit 66MHz PCI and 32-bit 33MHz PCI. The regular bus is somewhere between 24 and 44 percent slower than its faster 64-bit 66MHz brother, on which the MegaRAID can use all its resources.

Tweakers.net StorageMark 2003 - Office Light (IOps)

| Adapter | Bus | IOps |
| --- | --- | --- |
| Mylex AcceleRAID 600 | PCI-X 100 | 1299 |
| Mylex AcceleRAID 600 | PCI64/66 | 1282 |
| LSI MegaRAID Elite 1600 | PCI64/66 | 1190 |
| LSI MegaRAID Elite 1600 | PCI32/33 | 935 |
| LSI MegaRAID Elite 1600 | PCI32/33 + load | 826 |

Tweakers.net StorageMark 2003 - Office Heavy (IOps)

| Adapter | Bus | IOps |
| --- | --- | --- |
| LSI MegaRAID Elite 1600 | PCI64/66 | 962 |
| Mylex AcceleRAID 600 | PCI64/66 | 885 |
| Mylex AcceleRAID 600 | PCI-X 100 | 877 |
| LSI MegaRAID Elite 1600 | PCI32/33 | 813 |
| LSI MegaRAID Elite 1600 | PCI32/33 + load | 746 |

The workstation test is based on VeriTest Content Creation Winstone 2002. This benchmark consists of Adobe Photoshop 6.0.1, Adobe Premiere 6.0, Macromedia Director 8.5, Macromedia Dreamweaver UltraDev 4, Windows Media Encoder 7, Netscape Navigator 6 and Sonic Foundry Sound Forge 5.0. As with the office benchmark there were two traces: a light version on an unfragmented disk and a heavy version on a fragmented disk. The heavy version uses a bigger disk area and has a WinAMP playlist and a Mozilla download running in the background. During part of this test Nero is running, simulating the burning of a CD from the hard disk at 4x speed.

The differences in performance between 64-bit 66MHz and 32-bit 33MHz PCI are even bigger in this test than in the Office benchmarks. With the MegaRAID on a 64-bit 66MHz bus, performance is a stunning 41 to 71 percent higher than on a heavily loaded 32-bit 33MHz PCI bus. The AcceleRAID 600 does not take a performance hit when using a 66MHz PCI-X bus. Remarkably, the AcceleRAID performs better than the MegaRAID in the Office and Workstation Light tests, but the MegaRAID outmaneuvers the AcceleRAID in the Office and Workstation Heavy tests. On a 32-bit 33MHz PCI bus the MegaRAID is easily outperformed by the AcceleRAID on all fronts.

Tweakers.net StorageMark 2003 - Workstation Light (IOps)

| Adapter | Bus | IOps |
| --- | --- | --- |
| Mylex AcceleRAID 600 | PCI-X 100 | 1099 |
| Mylex AcceleRAID 600 | PCI64/66 | 1087 |
| LSI MegaRAID Elite 1600 | PCI64/66 | 943 |
| LSI MegaRAID Elite 1600 | PCI32/33 | 641 |
| LSI MegaRAID Elite 1600 | PCI32/33 + load | 552 |

Tweakers.net StorageMark 2003 - Workstation Heavy (IOps)

| Adapter | Bus | IOps |
| --- | --- | --- |
| LSI MegaRAID Elite 1600 | PCI64/66 | 893 |
| Mylex AcceleRAID 600 | PCI64/66 | 870 |
| Mylex AcceleRAID 600 | PCI-X 100 | 870 |
| LSI MegaRAID Elite 1600 | PCI32/33 | 704 |
| LSI MegaRAID Elite 1600 | PCI32/33 + load | 633 |

Software Installation is a heavy test recorded while installing Adobe Photoshop 7, Microsoft Office XP and Corel WordPerfect 2000. To simulate the actions of an impatient user some smaller programs, like Nero, WinZip, Acrobat Reader, Mozilla, HomeSite and ACDSee, were installed as well. The performance strongly depends on the caching performance of both the RAID controller and the hard disk. The MegaRAID performs admirably, outperforming the AcceleRAID even on a heavily loaded 32-bit 33MHz PCI bus. The differences between a 32-bit 33MHz and a 64-bit 66MHz PCI bus are, as before, very large. The identical results of the AcceleRAID on both buses show that the error margin of this benchmark tool is negligible.

Tweakers.net StorageMark 2003 - Software Installation (IOps)

| Adapter | Bus | IOps |
| --- | --- | --- |
| LSI MegaRAID Elite 1600 | PCI64/66 | 1282 |
| LSI MegaRAID Elite 1600 | PCI32/33 | 962 |
| LSI MegaRAID Elite 1600 | PCI32/33 + load | 847 |
| Mylex AcceleRAID 600 | PCI-X 100 | 794 |
| Mylex AcceleRAID 600 | PCI64/66 | 794 |

Especially for RAID benchmarks we developed a new DVD Copy test, which was recorded while creating a DVD in IfoEdit. During the test the original DVD data was read from disk and, after editing, saved by IfoEdit. The access pattern is mostly sequential. Not surprisingly, the Mylex AcceleRAID 600 is way ahead of the MegaRAID in this test. The high transfer rates make the difference between an unloaded 64-bit 66MHz bus and a strained legacy PCI bus very noticeable. In this test we can also see some difference between a 66MHz and a 100MHz PCI-X bus with the AcceleRAID.

Tweakers.net StorageMark 2003 - DVD Copy (IOps)

| Adapter | Bus | IOps |
| --- | --- | --- |
| Mylex AcceleRAID 600 | PCI-X 100 | 1563 |
| Mylex AcceleRAID 600 | PCI64/66 | 1493 |
| LSI MegaRAID Elite 1600 | PCI64/66 | 613 |
| LSI MegaRAID Elite 1600 | PCI32/33 | 452 |
| LSI MegaRAID Elite 1600 | PCI32/33 + load | 389 |

The weighted average of the desktop benchmarks shows that the LSI MegaRAID Elite 1600 performs on average 30.4 percent better on a 64-bit 66MHz PCI bus than on its 32-bit 33MHz counterpart. When the Western Digital hard disk took away 25MB/s of bus bandwidth, the difference rose to nearly 50 percent. There is no noticeable difference in performance between 66MHz and 100MHz when the RAID controller is using the PCI-X bus.

Tweakers.net StorageMark 2003 - Desktop - Weighted average (IOps)

| Adapter | Bus | IOps |
| --- | --- | --- |
| Mylex AcceleRAID 600 | PCI-X 100 | 1076 |
| Mylex AcceleRAID 600 | PCI64/66 | 1062 |
| LSI MegaRAID Elite 1600 | PCI64/66 | 964 |
| LSI MegaRAID Elite 1600 | PCI32/33 | 739 |
| LSI MegaRAID Elite 1600 | PCI32/33 + load | 656 |
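The percentages for the weighted average can be recomputed from the table above. The sketch below does so with a small helper; the dictionary layout and function name are illustrative, and the inputs are the rounded IOps values from the table, so results may differ slightly from figures based on unrounded data.

```python
# Weighted desktop averages (IOps), copied from the table above.
weighted_iops = {
    ("AcceleRAID 600", "PCI-X 100"): 1076,
    ("AcceleRAID 600", "PCI64/66"): 1062,
    ("MegaRAID Elite 1600", "PCI64/66"): 964,
    ("MegaRAID Elite 1600", "PCI32/33"): 739,
    ("MegaRAID Elite 1600", "PCI32/33 + load"): 656,
}


def percent_faster(fast: float, slow: float) -> float:
    """How much higher `fast` is than `slow`, as a percentage of `slow`."""
    return (fast / slow - 1) * 100


mega_fast = weighted_iops[("MegaRAID Elite 1600", "PCI64/66")]
mega_slow = weighted_iops[("MegaRAID Elite 1600", "PCI32/33")]
mega_load = weighted_iops[("MegaRAID Elite 1600", "PCI32/33 + load")]
accel_100 = weighted_iops[("AcceleRAID 600", "PCI-X 100")]
accel_66 = weighted_iops[("AcceleRAID 600", "PCI64/66")]

print(f"MegaRAID, 64/66 vs 32/33:        {percent_faster(mega_fast, mega_slow):.1f}%")
print(f"MegaRAID, 64/66 vs 32/33 + load: {percent_faster(mega_fast, mega_load):.1f}%")
print(f"AcceleRAID, PCI-X 100 vs 66MHz:  {percent_faster(accel_100, accel_66):.1f}%")
```

With these rounded inputs the unloaded difference comes out at 30.4 percent and the loaded one at about 47 percent, while the gap between the two AcceleRAID configurations is only a little over one percent.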

Server performance

Conclusion

The benchmarks on the previous pages clearly show that the limited bandwidth of the legacy PCI bus forms a real bottleneck for heavy RAID systems. On the legacy bus a relatively old Ultra160 SCSI RAID adapter delivered on average 32 percent less I/O performance under desktop workloads.

Anyone who puts more bandwidth-consuming devices on the legacy PCI bus, like graphics cards, video-editing cards or multi-channel audio cards, will lose something in the vicinity of 45 percent of performance. The differences are smaller in the server benchmarks, but this is partly due to the use of RAID-5 instead of RAID-0.

Tweakers who want a big RAID array are wise to buy a motherboard equipped with a 64-bit PCI bus. Besides the advantage of more bandwidth, PCI-X mainboards also let you use separate PCI buses, eliminating conflicts between, for instance, a sound card and a RAID adapter. Unfortunately 64-bit PCI slots are mostly found on expensive workstation mainboards, which come with a price tag of about 450 dollars. The cheapest route to a high-bandwidth system is a dual Athlon motherboard based on the AMD 760MPX chipset. These boards cost about 250 dollars, or less if you can get one secondhand. Note that these boards have to do without a lot of features present on modern mainboards, like USB 2.0, FireWire and on-board Gigabit Ethernet.

The majority of desktop users will find the prices of workstation-class mainboards too steep. They will just have to wait for PCI Express to arrive. This technology promises to be a lot more scalable than the current PCI standard, which is still stuck at a maximum bandwidth of 133MB/s. Hopefully the available bandwidth will then exceed the demand for it, making bandwidth limitations a thing of the past. Until then manufacturers are trying to bring some relief by connecting (Serial) ATA RAID and Gigabit Ethernet controllers directly to the southbridge interconnect, taking away the need to use valuable PCI bandwidth.

We thank both AMD and MSI for making this review possible by providing us with two Opteron processors and the K8D Master dual Opteron mainboard, respectively. Finally, we would like to thank the translator of the original article from Dutch to English.