Introduction

The release of Intel's 64 bit Itanium processor is getting closer and closer: very soon we'll see the pilot release of 733 and 800MHz systems. Intel has been quite secretive about the chip's performance, and the few machines currently lent to software developers are heavily guarded, not just physically but also by the threat of lawsuits under non-disclosure agreements. Being Tweakers, though, we are naturally curious about the Itanium. What kind of processor is it? Why has it taken Intel so long? What are the future plans for it?

Many of these questions can now be answered from the numerous Intel and HP presentations available on the internet. Hardly anyone knows how fast it is, though...

Some time ago Tweakers.net got the opportunity to see an Itanium system up close, and of course we couldn't pass that up. Daniel and Wouter, equipped with benchmark software and laptops, traveled to a secret location somewhere in Europe to run a number of tests. In this sneak preview you'll find answers that were previously known only to Intel, HP and a select few software developers.

Some History:

Before heading over to the benchmarks we'll spend a few pages explaining the CPU itself, because the Itanium is not just another turbo-charged Pentium Pro: it's a 64 bit processor developed from the ground up. 64 bit processors are nothing new; Digital released the 100MHz Alpha 21064 in 1992, quickly followed by Sun, IBM and HP. Intel, however, felt the time wasn't ripe yet for 64 bit processors: the advantages were much smaller than the disadvantages and the additional design costs, so it would have been complete overkill at the time. Intel decided to wait and make its 64 bit processor something really new.

Six years ago Intel started an ambitious project in cooperation with HP. Management had decided not only to migrate to 64 bit but also to adopt a completely new architecture. So in 1994 Intel started its biggest project ever. The goal: designing a new 64 bit architecture, the biggest improvement in CPU technology since the 386. The budget: billions.

The designers at Intel and HP got unprecedented freedom: every important idea for building a fast, efficient processor that had surfaced over decades of experience could be applied. Normally, applying revolutionary ideas is virtually impossible because backward compatibility is an important goal for any new design. That's useful for the user but not for the designers. The higher clock speeds climbed and the more features had to be incorporated, the more problems surfaced with the aging IA-32 architecture.

Why a new architecture?

After thirteen years of service in every processor from the 386 to the Pentium 4, the IA-32 architecture is ripe for replacement. IA-32 is a CISC (Complex Instruction Set Computing) architecture, meaning the processor offers a large number of possible instructions, many of them complex. Because executing all of these complex instructions directly in hardware is impractical, CISC processors often have an internal RISC (Reduced Instruction Set Computing) design: they break each instruction down into a small set of simple operations. Because the internal design of the processor is completely different from the way it is presented to software, a lot of translation has to be performed. This is not easy, and it shows that IA-32 is past its prime.
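
To make the idea of internal translation concrete, here is a minimal Python sketch that splits one "complex" instruction into simple RISC-like micro-operations. The instruction format, the `[name]` memory notation, the temporary registers `t0`/`t1` and the function `decode` are all hypothetical; this is not how any real x86 decoder works, it only shows the principle of a memory-operand instruction turning into separate load, compute and store steps.

```python
# Hypothetical toy format: (opcode, destination, source).
# Operands written as "[name]" refer to memory; plain names are registers.

def is_mem(operand: str) -> bool:
    """True if the operand refers to memory rather than a register."""
    return operand.startswith("[") and operand.endswith("]")

def decode(opcode: str, dst: str, src: str) -> list[tuple[str, ...]]:
    """Translate one toy CISC instruction into a list of simple micro-ops."""
    uops: list[tuple[str, ...]] = []
    # A memory source is first loaded into temporary register t1.
    if is_mem(src):
        uops.append(("load", "t1", src[1:-1]))
        src = "t1"
    if is_mem(dst):
        # Read-modify-write on memory: load, operate, store back.
        addr = dst[1:-1]
        uops.append(("load", "t0", addr))
        uops.append((opcode, "t0", "t0", src))
        uops.append(("store", addr, "t0"))
    else:
        # Register destination: a single three-operand micro-op.
        uops.append((opcode, dst, dst, src))
    return uops

print(decode("add", "[x]", "eax"))
# [('load', 't0', 'x'), ('add', 't0', 't0', 'eax'), ('store', 'x', 't0')]
```

A register-only instruction such as `decode("add", "eax", "ebx")` stays a single micro-op; only the memory forms expand.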

Some current problems:

A processor has a number of execution units that are responsible for the raw calculations. Other parts of the chip make sure that instructions and data are brought in and results taken out. To get the most out of a processor, the execution units must be supplied with data and instructions continuously. This doesn't seem difficult, but it takes quite a bit of care: although the instructions are neatly arranged in memory, a program hardly ever executes them in exactly that order. Some instructions should only be executed if certain conditions have been met, and sometimes a choice has to be made between two code paths depending on the result of a previous instruction.

To complicate matters further, the processor works with a pipeline. Instructions go in at the front of the pipeline and, after traveling through the internals of the processor, their results are put in the right place. Because the pipeline is long, multiple instructions can travel through it at the same time. As long as everything is executed in order this poses no problem, but when the program reaches a branch, the processor has to wait for the result of instruction A before it knows whether instruction B or C should enter the pipeline. This is a problem because, as stated before, the execution units have to be kept fed, and as long as nothing enters the pipeline they are just sitting there picking their nose.

One solution is to look at the other instructions that are waiting when such a branch-wait occurs: if any of them are independent of the instruction whose result is pending, and therefore won't disturb the running of the program, they can be executed in between. This method is called OOO, Out Of Order execution. The advantage is that the execution units can get some work done while waiting for the result of instruction A. Of course, finding suitable instructions takes time too, in some cases more time than simply waiting. That's why something else has been thought of: gambling.
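
The difference between waiting and skipping ahead can be shown with a toy scheduler. The sketch below rests on simplifying assumptions of our own: one instruction issues per cycle, every register is written only once (so only true data dependencies matter), and the instruction names, registers and latencies are made up. It is not a model of any real Intel scheduler.

```python
import math
from dataclasses import dataclass

@dataclass
class Instr:
    name: str
    dest: str
    srcs: tuple[str, ...]
    latency: int = 1

def schedule(program: list[Instr], out_of_order: bool) -> dict[str, int]:
    """Return the cycle in which each instruction issues (one per cycle).

    In-order: an instruction whose inputs are not ready blocks everything
    behind it. Out-of-order: the oldest instruction whose inputs are ready
    may issue instead.
    """
    # Registers produced by the program are unavailable until computed;
    # any other register is treated as an input that is ready at cycle 0.
    ready_at = {ins.dest: math.inf for ins in program}
    pending = list(program)
    issued: dict[str, int] = {}
    cycle = 0
    while pending:
        candidates = pending if out_of_order else pending[:1]
        for ins in candidates:
            if all(ready_at.get(r, 0) <= cycle for r in ins.srcs):
                issued[ins.name] = cycle
                ready_at[ins.dest] = cycle + ins.latency
                pending.remove(ins)
                break
        cycle += 1
    return issued

program = [
    Instr("A", "r1", ("r0",), latency=3),  # slow operation, e.g. a load
    Instr("B", "r2", ("r1",)),             # needs A's result
    Instr("C", "r3", ("r4",)),             # independent
    Instr("D", "r5", ("r6",)),             # independent
]
print(schedule(program, out_of_order=False))  # {'A': 0, 'B': 3, 'C': 4, 'D': 5}
print(schedule(program, out_of_order=True))   # {'A': 0, 'C': 1, 'D': 2, 'B': 3}
```

In order, C and D sit behind the stalled B; out of order, they fill the cycles in which the execution units would otherwise be idle.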

Branch predictors are very effective pieces of hardware, built around statistical algorithms, that gamble on the outcome of a branch; they guess right with about 95% accuracy. When they're wrong, however, it's a small disaster for the processor. Suppose the result of instruction A determines whether instruction B or E should be executed. The branch predictor says B and enters A, B, C and D into the pipeline. As soon as A comes out at the other end it becomes clear that it should have been E. At this point the effects of B, C and D have to be undone, which takes quite a few clock cycles. Only when the pipeline is empty again (flushed) and the values have been restored can E, F and G enter the pipeline. Mispredictions like this unfortunately cannot be avoided, and as pipelines get longer they are becoming a bigger problem.
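
The article does not say which prediction scheme Intel uses, so as an illustration here is a classic textbook predictor: a single 2-bit saturating counter, sketched in Python. A loop branch that is taken nine times and then falls through is mispredicted only once per pass, the kind of behavior that gives predictors their high hit rates on regular code.

```python
def simulate_two_bit_predictor(outcomes: list[bool]) -> int:
    """Count mispredictions of a single 2-bit saturating counter.

    States 0-1 predict 'not taken', states 2-3 predict 'taken'. Each actual
    outcome moves the counter one step, so a single surprise (such as a
    loop exit) does not immediately flip the prediction.
    """
    counter = 2  # start in 'weakly taken' (an arbitrary choice)
    mispredictions = 0
    for taken in outcomes:
        predicted_taken = counter >= 2
        if predicted_taken != taken:
            mispredictions += 1
        counter = min(counter + 1, 3) if taken else max(counter - 1, 0)
    return mispredictions

loop = ([True] * 9 + [False]) * 10   # a 10-iteration loop, run 10 times
print(simulate_two_bit_predictor(loop))  # 10 mispredictions out of 100 branches
```

Multiplying the misprediction count by the number of cycles a pipeline flush costs gives a rough estimate of the time lost.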

Another big disadvantage of IA-32 is that it has too few registers, the small pieces of memory on the processor that are even faster than L1 cache. This limits the scalability of the processor; for example, it is not possible to efficiently execute more than three instructions per clock cycle on an IA-32 processor. The 32 bit limit is starting to become a problem too: if you want to work with extremely large files or address very large amounts of memory, 32 bits don't cut it anymore. While this is not a big problem at the moment, given the growth of information technology it won't be long before it becomes a serious bottleneck.

EPIC: the solution

The new architecture had to solve most of these problems; in addition, it had to last about 25 years and scale well. It was therefore soon decided that the new standard would be based on EPIC: Explicitly Parallel Instruction Computing. The ideas behind it originated around 1980 and center on executing instructions in parallel and using the available execution units as efficiently as possible. An EPIC architecture is therefore well suited to solving the problems described above.

A processor has a hard time knowing which instructions influence each other: at run time it can only inspect a small window of instructions, with very little time to do so. The compiler, the program that translates a programming language into machine code, has plenty of time, however. The E in EPIC stands for Explicit: the program tells the processor which parts can be executed at the same time (in parallel). An EPIC compiler therefore has more responsibilities than an x86 compiler. Its task is to analyze the program code, determine where branches occur and find out which parts can be executed in parallel.
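
A minimal sketch of the compiler's side of the job, under simplifying assumptions: a straight-line program without branches, every register written only once, and no limit on how many instructions fit in a group. The function name `group_independent` and the example program are hypothetical. Each resulting group holds instructions that do not depend on each other, roughly what IA-64 assembly marks off as an instruction group ending in a stop (`;;`).

```python
def group_independent(program: list[tuple[str, str, tuple[str, ...]]]) -> list[list[str]]:
    """Split a straight-line program into groups of mutually independent instructions.

    Each entry is (name, dest, sources). An instruction goes into the group
    right after the latest group that produces one of its inputs, so all
    instructions within a group can execute in parallel. Only read-after-write
    dependencies are considered (registers are assumed to be written once).
    """
    level_of_reg: dict[str, int] = {}
    groups: list[list[str]] = []
    for name, dest, srcs in program:
        level = max((level_of_reg[r] + 1 for r in srcs if r in level_of_reg), default=0)
        level_of_reg[dest] = level
        while len(groups) <= level:
            groups.append([])
        groups[level].append(name)
    return groups

program = [
    ("a", "a", ("x", "y")),   # a = x + y
    ("b", "b", ("x",)),       # b = x * 2
    ("c", "c", ("a", "b")),   # c = a + b   (needs a and b)
    ("d", "d", ("y",)),       # d = y - 1
    ("e", "e", ("c", "d")),   # e = c * d   (needs c and d)
]
print(group_independent(program))  # [['a', 'b', 'd'], ['c'], ['e']]
```

A real compiler also has to respect the number of execution units and the bundle templates, which this sketch ignores.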

The compiler can't guarantee a continuous stream of code to be processed, so EPIC processors are troubled by branches too. EPIC solves this by means of predication. The processor does not bet on one possibility but processes both at the same time; as soon as it becomes known which one is right, the other one gets killed. Suppose again that the result of A determines whether instruction B or E should be executed. Instead of betting, the processor enters A, B, C, D, E, F and G into the pipeline. As soon as A comes out, it becomes clear that E was the right answer. At that moment the execution of B, C and D is aborted and the values resulting from E, F and G are written to the correct places. Had B, C and D been the right ones, this would not have cost any extra clock tick either. The enormous misprediction penalty is eliminated this way.
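
The effect of predication can be imitated in ordinary code through "if-conversion". In this Python sketch (function names hypothetical) the branchy version picks one path, while the predicated version computes both results and lets a true/false predicate select which one counts, leaving no branch to mispredict. On real IA-64 hardware each instruction carries a predicate register and its result is discarded when that predicate is false; the multiplication trick here only mimics that outcome.

```python
def with_branch(a: int, x: int) -> int:
    # Classic control flow: only one path runs, but the CPU has to guess which.
    if a > 0:
        return x + 1   # path "B"
    return x - 1       # path "E"

def predicated(a: int, x: int) -> int:
    # If-converted form: one compare sets a predicate and its complement.
    p_true = a > 0
    p_false = not p_true
    result_b = x + 1   # computed as if guarded by p_true
    result_e = x - 1   # computed as if guarded by p_false
    # Exactly one predicate is 1 (True), so only that result survives.
    return p_true * result_b + p_false * result_e

print(predicated(5, 10), predicated(-3, 10))  # 11 9
```

Both paths cost execution resources, which is why this approach pays off on a wide machine like IA-64 with many execution units to spare.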

It can also happen that instructions temporarily cannot be executed because they need data from the cache, the RAM or, worst case, the hard disk. EPIC tries to minimize this wait by means of speculation: the processor is told that it will need certain data before it actually needs them, so it can have them fetched and put in the cache in advance. A disadvantage of fetching data early is that they can change between fetching and actually using them, which is why the processor checks once more right before executing the instruction.
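
IA-64's version of this check is called data speculation: an "advanced load" is recorded in a table (the ALAT, Advanced Load Address Table), a later store to the same address removes the entry, and a check before use detects the missing entry so the value can be loaded again. The Python class below is a toy model of that idea with made-up names (`SpeculativeLoads`, `advanced_load`, `check`); it is not Intel's interface and ignores details such as table size and recovery code.

```python
class SpeculativeLoads:
    """Toy model of loading early and validating the value before use."""

    def __init__(self, memory: dict[str, int]):
        self.memory = memory
        self.entries: dict[str, str] = {}  # register -> address it was loaded from
        self.regs: dict[str, int] = {}

    def advanced_load(self, reg: str, addr: str) -> None:
        # Load early and remember where the value came from.
        self.regs[reg] = self.memory[addr]
        self.entries[reg] = addr

    def store(self, addr: str, value: int) -> None:
        self.memory[addr] = value
        # Any early load from this address may now hold a stale value.
        for reg, loaded_from in list(self.entries.items()):
            if loaded_from == addr:
                del self.entries[reg]

    def check(self, reg: str, addr: str) -> int:
        # Entry still present: the early value is valid. Otherwise reload.
        if reg not in self.entries:
            self.regs[reg] = self.memory[addr]
        return self.regs[reg]

cpu = SpeculativeLoads({"x": 1, "y": 2})
cpu.advanced_load("r1", "x")   # load hoisted well before its use
cpu.store("y", 5)              # unrelated store: r1 stays valid
print(cpu.check("r1", "x"))    # 1
cpu.store("x", 7)              # store to the same address invalidates r1
print(cpu.check("r1", "x"))    # 7 (reloaded)
```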

What finally comes out of the compiler are instruction bundles, each holding a number of instructions that have been checked beforehand to make sure they can execute in parallel without influencing each other. A bundle also carries some information for the processor about the instructions in it and about its relation to the previous and next bundle, because the compiler groups bundles together too. A bundle can also contain additional instructions, for instance for speculation. This is a totally different approach from x86 and is best compared to VLIW (Very Long Instruction Word), as used by Transmeta.

Intel's Tahoe architecture

Tahoe, the codename for Intel's new EPIC-based ISA (Instruction Set Architecture), has received the official name Intel Architecture 64 (IA-64). In IA-64 the instruction bundles are 128 bits long and contain a maximum of three instructions of 41 bits each, whereas IA-32 instructions have no fixed length. These three instructions take up 123 bits, which leaves 5 bits for extra information about the bundle that helps the processor use its resources more efficiently.
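
The bundle arithmetic (3 × 41 + 5 = 128 bits) can be checked with a small packing routine. This Python sketch assumes the layout given in Intel's IA-64 documentation as we understand it: the 5-bit template in the lowest bits, followed by the three 41-bit slots. The helper names are hypothetical, and the slot contents are plain numbers, not encoded instructions.

```python
TEMPLATE_BITS = 5
SLOT_BITS = 41
SLOT_MASK = (1 << SLOT_BITS) - 1

def pack_bundle(template: int, slots: tuple[int, int, int]) -> int:
    """Pack a 5-bit template and three 41-bit slots into one 128-bit integer.

    Assumed layout: template in bits 0-4, slot 0 in bits 5-45,
    slot 1 in bits 46-86, slot 2 in bits 87-127.
    """
    if not 0 <= template < (1 << TEMPLATE_BITS):
        raise ValueError("template must fit in 5 bits")
    bundle = template
    for i, slot in enumerate(slots):
        if not 0 <= slot <= SLOT_MASK:
            raise ValueError(f"slot {i} must fit in 41 bits")
        bundle |= slot << (TEMPLATE_BITS + i * SLOT_BITS)
    return bundle

def unpack_bundle(bundle: int) -> tuple[int, tuple[int, ...]]:
    """Split a 128-bit bundle back into its template and three slots."""
    template = bundle & ((1 << TEMPLATE_BITS) - 1)
    slots = tuple((bundle >> (TEMPLATE_BITS + i * SLOT_BITS)) & SLOT_MASK for i in range(3))
    return template, slots

print(unpack_bundle(pack_bundle(0x10, (1, 2, 3))))            # (16, (1, 2, 3))
print(pack_bundle(0x1F, (SLOT_MASK,) * 3) == (1 << 128) - 1)  # True: exactly 128 bits
```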

Apart from the large step from CISC to EPIC, Intel also made the leap from 32 to 64 bit. What exactly does that mean? First, the processor can work with 64 bit (8 byte) values, which means larger numbers and greater precision than on 32 bit processors. Second, the address bus can be expanded to 64 bit, so the IA-64 architecture can address a maximum of about 18 exabytes of memory. The 4GB that Windows 98 can use, and even the 64GB that Windows 2000 Datacenter Server can address in combination with Pentium III Xeon processors, pale in comparison.
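
The memory limits in this paragraph follow directly from the number of address bits. The short calculation below, a sketch that treats "GB" as 2^30 bytes and "exabyte" as 10^18 bytes, reproduces the 4GB, 64GB and roughly 18 exabyte figures; the 64GB limit corresponds to the 36 bit physical addressing (PAE) of Pentium III Xeon systems.

```python
GIB = 2 ** 30        # "GB" as used for memory sizes
EXABYTE = 10 ** 18   # decimal exabyte

def addressable_bytes(address_bits: int) -> int:
    """Number of distinct byte addresses reachable with `address_bits` bits."""
    return 2 ** address_bits

print(addressable_bytes(32) // GIB)      # 4    (32 bit addressing)
print(addressable_bytes(36) // GIB)      # 64   (36 bit physical addressing, PAE)
print(addressable_bytes(64) / EXABYTE)   # about 18.4 (64 bit addressing)
```

In binary units 2^64 bytes is exactly 16 EiB; the "18" comes from counting in powers of ten.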

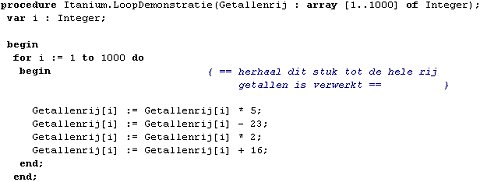

Additionally, IA-64 supports software pipelining, backed in hardware by register rotation. This is especially useful for operations that have to be applied to a series of values, as in the while and for loops every programmer knows. Instead of applying the same operations to a row of values one by one, the processor simply moves the values in the registers one place along on every iteration. In reality the registers are renamed and the data is not physically moved, but the effect is the same: multiple values in different stages of processing are present at the same time. This saves a lot of time because the code is smaller and less has to be fetched from memory.
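
Here is a minimal Python sketch of a software-pipelined loop, assuming a made-up three-stage loop body (load, multiply, store) and using a two-entry list per stage to stand in for rotating registers. Each pass of the loop works on three different elements at once, and "rotating" the lists replaces explicit copy instructions. It illustrates the principle only; the function name is hypothetical, and real IA-64 code does this with rotating register names and special loop branches.

```python
def pipelined_scale(data: list[int], factor: int) -> list[int]:
    """Compute [x * factor for x in data] as a 3-stage software pipeline.

    In one iteration, stage 1 loads element i, stage 2 multiplies element
    i-1 and stage 3 stores element i-2. Values pass between stages through
    small 'rotating register' lists instead of being copied explicitly.
    """
    out: list[int] = []
    loaded = [None, None]   # [loaded this iteration, loaded last iteration]
    product = [None, None]  # [computed this iteration, computed last iteration]
    n = len(data)
    for i in range(n + 2):  # two extra iterations drain the pipeline
        # Rotate: last iteration's "current" register becomes "previous".
        loaded = [None] + loaded[:-1]
        product = [None] + product[:-1]
        if i < n:                       # stage 1: load element i
            loaded[0] = data[i]
        if loaded[1] is not None:       # stage 2: multiply element i-1
            product[0] = loaded[1] * factor
        if product[1] is not None:      # stage 3: store element i-2
            out.append(product[1])
    return out

print(pipelined_scale([1, 2, 3], 10))  # [10, 20, 30]
```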

The main principle behind IA-64 is parallelism: an IA-64 processor must be capable of doing a lot of things simultaneously. For that you need not only a lot of execution units but also a whole load of registers. Today's IA-32 processors expose only eight general-purpose registers and generally 32 or fewer registers in total, while IA-64 processors need over 256. Apart from enabling parallel work, the many registers have another advantage: complex calculations with many values can be executed quickly, without falling back on the cache.

The performance of an IA-64 processor depends enormously on its compiler. That is a disadvantage, because a compiler is very complex to write: it has to take an enormous number of factors into account to analyze the source code and produce the instruction bundles, and whoever writes one must know the processor architecture inside out. The compilers for IA-64 have been under development almost as long as the architecture itself, but despite all the effort they won't be optimal when released. Through optimizations and new ideas for smarter compilers, the performance of IA-64 processors should be able to increase considerably over the years.

The first IA-64 hardware...

Now that you know what EPIC and IA-64 are, it's time to talk about the very first IA-64 processor, known inside Intel by the codename Merced and to the public as Itanium. The physical properties of the Itanium aren't impressive at all: six layers of aluminum, a lowly 25 million transistors produced at 0.18 micron, and a clock speed not exceeding 800MHz. Even the Pentium III core seems more advanced with its 28 million transistors and current top speed of 1GHz, let alone the Pentium 4 with its 42 million transistors at 1.5GHz. Still, for a chip that should have been released in 1997, not bad.

Inside, the Itanium core is a lot more interesting. It can process two 128 bit IA-64 instruction bundles at the same time, which means a maximum of six instructions per clock tick; because some instructions consist of multiple operations that the Itanium can perform simultaneously, the number of operations per clock tick can rise to 20. This takes a small army of execution units and registers: the 11 execution units and 328 registers can deliver a theoretical maximum of 6.4 GFLOPS. This enormous amount of resources enables the Itanium to work in parallel, as the EPIC philosophy requires. Here is a breakdown of the internal features:

| Count | Execution units |
|---|---|
| 2 | Floating point units |
| 4 | Integer units |
| 2 | Load-store units |
| 3 | Branch units |

| Count | Registers |
|---|---|
| 128 | General (integer/multimedia) registers |
| 128 | Floating point registers (82 bit) |
| 64 | Predicate registers |
| 8 | Branch registers |

The Itanium also has on-die cache: 16KB of L1 data cache, 16KB of L1 instruction cache and 96KB of L2 cache. This seems rather small for a processor with such features, but Intel amply compensates with L3 cache: 2 or 4MB can be placed on the processor cartridge, connected to the core via a 128 bit bus. Because this cache runs at full core speed, the bandwidth to the processor reaches 11.9GB/s for the 800MHz version.
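
The 11.9GB/s figure can be reproduced with simple arithmetic, assuming one 128 bit (16 byte) transfer per clock at 800MHz. That gives 12.8 billion bytes per second, which comes out at 11.9 when "GB" is counted as 2^30 bytes; we assume that is how the figure was derived.

```python
def peak_bandwidth(bus_width_bits: int, clock_hz: float) -> float:
    """Peak bytes per second for a bus that moves its full width once per clock."""
    return bus_width_bits / 8 * clock_hz

bw = peak_bandwidth(128, 800e6)
print(bw / 1e9)        # 12.8  (billions of bytes per second)
print(bw / 2 ** 30)    # about 11.92 (GB/s with GB = 2^30 bytes)
```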

Naturally the Itanium had to be reliable too. ECC has been applied to virtually every internal processor bus, which makes it possible to detect and correct errors without a reboot. Errors can be logged too, and the processor is capable of recognizing errors in the rest of the system and correcting or isolating them. The Itanium does not rely on the motherboard for a stable voltage either: an Intel-designed voltage regulator, even bigger than the processor itself, connects to the side of the big black cartridge that houses the core.

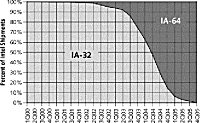

Then there's the problem of old software. Although the Itanium is a completely new design, Intel's customers will still want to use software that hasn't been ported to IA-64 yet every now and then. To make this possible the Itanium has a hardware decoder for IA-32 that's 100% compatible with current software, including MMX and SSE2. The EPIC core itself can't do anything with these instructions, so everything is translated into a form the Itanium can execute, and the results are returned in a form the old software understands.

The Itanium uses the Intel 460GX chipset, which supports up to four Itanium processors on a dual-pumped 133MHz bus (effectively 266MHz). The chipset can address a maximum of 64GB of memory, with a choice between PC100 SDRAM and PC1600 DDR SDRAM. Integrated Ethernet and AGP 4x are optional. For bigger systems you'll have to turn to other companies such as NEC, IBM, HP and Compaq, which are working on 16- and 32-way Itanium chipsets. For such a 32-way system you'd better break the piggy bank, by the way: an 800MHz Itanium with 4MB L3 cache will cost over 4000 dollars. Clearly the Itanium is absolutely not a desktop processor.

...and the future

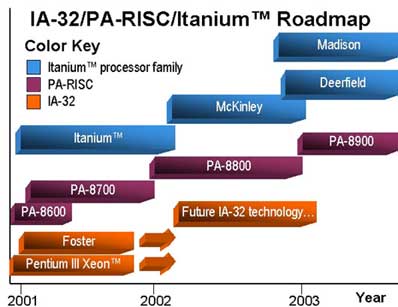

The Itanium is of course the very first IA-64 processor, designed, among other things, to develop software on and to gain experience in designing chips for the new architecture. The Itanium is also useful for testing motherboards and chipsets. To put it bluntly: the Itanium is a try-out. Intel seems to think likewise; it expects to sell at most a few dozen, partly because the high price and the lack of software will severely limit the applications for the processor. The road from IA-32 to IA-64 will be long, and there are only scraps of information about it.

The first IA-64 processor with serious applications will be McKinley, which will succeed the Itanium in 2002. McKinley will have a 10-stage pipeline, three more than the Itanium. This should enable it to reach 1GHz at its release at the end of 2001, making it about twice as fast as the Itanium. Like Foster, the server version of the Pentium 4, McKinley will use the i870 chipset, with support for DDR SDRAM and RDRAM. By then there will probably have been enough software development on the Itanium, but it's questionable whether costs will drop. Yields won't improve much, because Intel wants to integrate 4MB of L3 cache, consisting of nearly 300 million transistors, on die.

To lower costs, Intel wants to migrate to the cheaper 0.13 micron production process, and at the end of 2002 the first IA-64 processor will take that road. This chip, codenamed Madison, is the successor to McKinley. The smaller die size is not the only development during this period, however: Intel also wants to target mid-range servers and high-end workstations with a cheaper version of Madison. This processor, of which we only know the codename Deerfield, will not be nearly as fast but will have a much more acceptable price tag.

The competition:

As a side note to this article, a word about x86-64, AMD's answer to IA-64. Both architectures are 64 bit, but unlike Intel, AMD has not gone to the trouble of making a new design. Instead, it has extended its existing architecture with a number of 64 bit tricks. The first x86-64 processor will be the Sledgehammer, to be introduced at the beginning of 2002. With Clawhammer, a cheaper version of Sledgehammer, AMD targets consumers right at the launch of the architecture. The advantage of AMD's approach is that it costs a lot less money and offers much better support for existing software; the disadvantage is that the architecture is still based on the aging x86.

Testing the Itanium

When we heard we could preview the Itanium we were naturally glad, but a number of important questions soon arose. We were among the first to be in this situation and didn't really know how to go about it. After intensive sleuthing across the world, from vague Japanese sites to Intel's Developer Forum and emails to a number of big names like Paul DeMone, it became clear that there wasn't a single piece of IA-64 software for the Itanium to be found. Intel's VTune 5.0 compiler with support for Itanium and Pentium 4 was handed out only to a select few, and beta versions of Microsoft's SQL Server 64 were not exactly littering the streets either. There are of course IA-64 Linux distributions available, but because the system was already running Whistler, that would have been a bit tricky.

The advice we got was to focus on x86 performance because, especially in the beginning while IA-64 software is scarce, it will be almost as important as performance in IA-64 mode. For that reason the benchmarks we took along will look quite familiar. On the one hand that's nice, because it gives you lots of comparable material; on the other hand it's a pity that you can't get a good impression of the performance doing what the chip was built for: IA-64.

The test system had the following configuration:

| Component | Test system |
|---|---|
| Processor | Intel Itanium 667MHz |
| Motherboard | Intel 460GX chipset, dual Socket M |
| Memory | 512MB PC100 SDRAM |
| Video card | ATi Rage 128 |
| Storage | 2x 18GB Ultra160 SCSI |
| Software | Whistler Advanced Server 64 bit (Beta 1, Build 2296) |

Unfortunately we couldn't take any pictures of the test system, because its design and the ubiquitous serial numbers would betray whose system it was, and that person would get into trouble. I CAN tell you that the system is hefty: it takes two strong men to lift the case, and the average vacuum cleaner makes less noise than this system. That is not a joke. All in all very impressive, but you want to see the benchmarks.

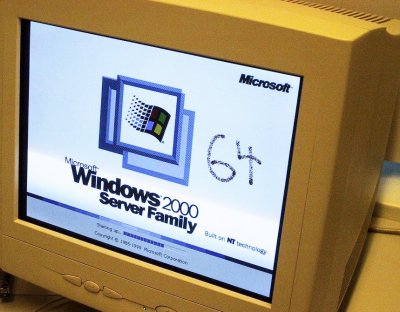

Whistler didn't cooperate much. We don't know whether that was due to Whistler or to the exotic Itanium hardware, but many of the standard benchmark suites wouldn't run. SiSoft Sandra 2000 refused to install, and so did Sysmark 2000 and WinBench99. 3DMark 2000 and Quake 3 refused to work because the video card wasn't DirectX 7 compatible. There we were with a pile of software to make the most of our limited time, and right away the system turned uncooperative. We couldn't even make a WCPUID screenshot, so this will have to do:

Benchmarks: RC5, Chess & Stream

Luckily there are more benchmarks than the ones you see everywhere, like the distributed.net client, better known as the cow. Fortunately this one did work on the system, although it obviously didn't recognize the processor. After the automatic selection of the fastest core we started the benchmark with anticipation. A few seconds later our jaws were on the floor: the Itanium didn't even reach 96 kkeys/s, a score even a 486 can beat! The RC5 cores of the client are of course heavily optimized for real x86 processors, which basically rules out a good score, but nobody expected it to be this bad.

Our second test is Tom Kerrigan's Simple Chess Program, a small program written explicitly to push the processor's branch predictors to the limit. It does this by simulating a game of chess: after calculating a number of moves three times, it reports the processor's average speed in MIPS. In theory the EPIC hardware should be able to outperform IA-32 processors at this kind of work, but the x86-to-IA-64 translation didn't do its job very well. The Itanium's score would make you cry here too: even the Pentium 100 outperformed it, while the 1.5GHz Pentium 4 was 20 times as fast:

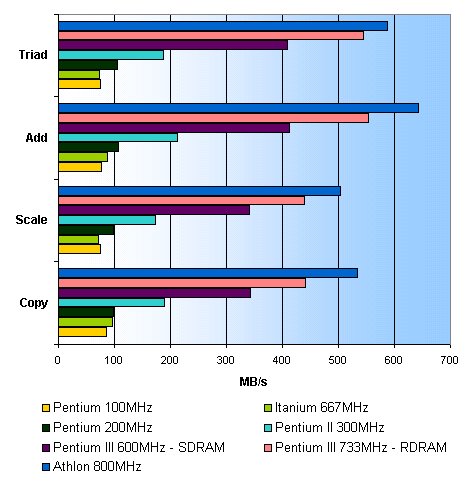

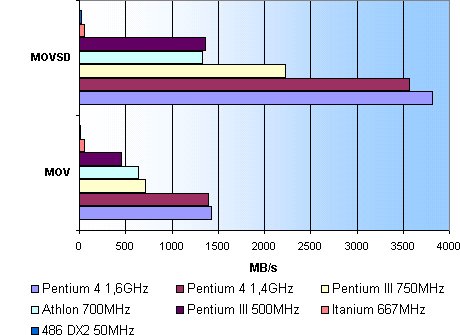

Before we move on to more real-life benchmarks we have one more synthetic test: Stream, a program that measures memory bandwidth while performing certain operations. We don't know whether the difference is due to the motherboard or the processor, but the Itanium did relatively better on bandwidth: instead of hovering around the level of a Pentium 75, it approached the performance of a Pentium 200.
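
STREAM itself is a small C/Fortran program; the Python sketch below only mirrors its "Triad" kernel (a[i] = b[i] + q·c[i]) and the way STREAM counts bytes for it: two 8-byte reads and one 8-byte write per element. In pure Python the interpreter, not the memory bus, is the bottleneck, so the number it prints says nothing about real hardware; the function names and array size are our own choices.

```python
import time
from array import array

def triad(a: array, b: array, c: array, scalar: float) -> None:
    """STREAM-style 'Triad' kernel: a[i] = b[i] + scalar * c[i]."""
    for i in range(len(a)):
        a[i] = b[i] + scalar * c[i]

def triad_bandwidth(n: int = 200_000, scalar: float = 3.0) -> float:
    """Return the Triad rate in bytes per second, counting 24 bytes per element.

    Per element: read b[i] (8 bytes), read c[i] (8 bytes), write a[i] (8 bytes).
    """
    a = array("d", [0.0]) * n
    b = array("d", [1.0]) * n
    c = array("d", [2.0]) * n
    start = time.perf_counter()
    triad(a, b, c, scalar)
    elapsed = time.perf_counter() - start
    return 3 * 8 * n / elapsed

print(f"{triad_bandwidth() / 1e6:.0f} MB/s (interpreter-bound)")
```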

Benchmarks: FlaskMPEG & TestCPU

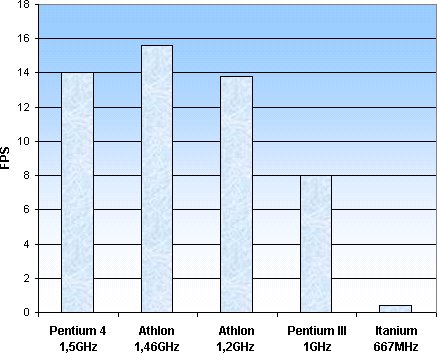

The Itanium isn't doing too well so far, but there's still hope: maybe it can do x86 floating point calculations fast. To look into that we'll use FlaskMPEG. Flask is a program for compressing video, which, as you will understand, involves heavy floating point activity. As input we used a .vob file ripped from the movie 'The Matrix'. Tom's Hardware was kind enough to give us some comparison material, and to keep a level playing field we used the same settings:

| Setting | FlaskMPEG setup |
|---|---|
| Codec | DivX 3.11 alpha, Fast-Motion |
| Resolution | 720 x 480, 29.97 FPS |
| Data rate | 910 kbps, keyframe every 10 seconds |
| Audio | Not processed |

High Quality, x87 optimized:

High Quality, SSE2/3DNow! optimized:

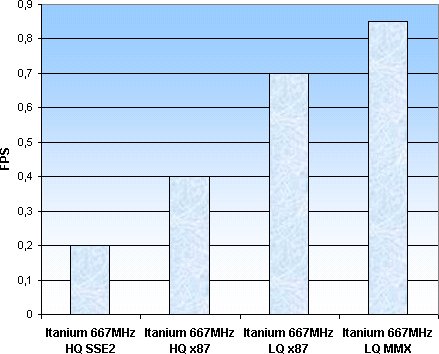

Itanium scores compared:

It is almost superfluous to comment on this, because it's clear that the Itanium is lagging severely. The last graph gives some extra information: it shows that SSE2 emulation on the Itanium only makes things worse. That's strange, because on the Pentium 4 these 144 additional instructions deliver quite an increase in speed. One explanation could be that the translation hardware has a hard time with the 'special' instructions that are part of IA-32, but that doesn't seem right, because using MMX does give a decent percentage increase. Apart from translating SSE2, the Itanium can also use native IA-64 SSE2 instructions, so translation shouldn't be a problem.

The last benchmark is TestCPU, a varied test of just about every aspect of the processor:

Conclusion

Before you start typing your "Intel sucks" reaction, it's advisable to read this last part as well. There are a number of factors that can explain the poor performance in our benchmarks.

Pre-release hardware:

The Itanium we tested is, as you know, a 667MHz model; the versions that will be released run at 733 and 800MHz. That extra clock speed won't suddenly make the Itanium a lot faster, but we should assume the final version could undergo other changes as well, such as a faster revision of the core or a faster IA-32 decoder. A sudden large jump in performance is unlikely, but some improvement wouldn't be a surprise.

Pre-release software:

Windows Whistler itself is still in beta, and the IA-64 HAL (Hardware Abstraction Layer) is probably nowhere near finished either. Microsoft will probably improve and optimize many things before the release, which should also result in better performance. Remember, we don't know how much of the slowness is caused by the software and how much by the hardware.

Lack of IA-64 benchmarks:

We could not run IA-64 software on the system, simply because there was none to be found. It should be remembered that the Itanium was developed 100% for IA-64 and that the IA-32 decoder was of secondary importance to Intel. The true power of the CPU lies not in the tests we conducted but in the applications that are still being developed. Early benchmarks with IA-64 code on Itanium systems have reportedly shown that the new architecture is capable of blowing the P4 out of the water at half the clock speed.

From our own experience we can only talk about Whistler, the only piece of Itanium software we were able to check out. The operating system ran smoothly, which at least suggests that the Itanium in IA-64 mode is as fast as a Pentium III or Athlon at the same clock speed.

Itanium as part of a larger whole:

The Itanium may not offer ideal performance yet, and certainly not an ideal price/performance ratio, but that has never been Intel's goal. The first IA-64 processor was made to prove that it can be done and to develop software on. The Itanium is no more than a first try. The second generation, McKinley, will also live out its life in extremely expensive database clusters. Not until 3 or 4 years from now will the Itanium's successors enter our homes, and that is enough time to improve IA-32 performance. The introduction of the new 64 bit EPIC architecture is without a doubt the biggest and longest project Intel has ever undertaken, and the Itanium is just the start of what awaits us.

Conclusion:

The IA-64 architecture is promising because of its revolutionary EPIC features, which will enable us to build bigger and more powerful computers in the future. There is still a long way to go, however, and before you let the poor IA-32 performance of the first chip disappoint you, look at the bigger picture. You won't be seeing this at home anytime soon, after all:

Finally, we would like to thank the person who made this review possible; his name has to be kept secret, but we know who you are! We would also like to thank gollem and Majic for translating the article.
